Separating Operational Reality From AI Hype

Healthcare leaders are investing in AI, but results vary widely. Some use cases deliver measurable value, while others introduce risk and complexity.


Why AI Success in Healthcare Is Inconsistent

Where AI Delivers Real Operational Value

AI works best in high-volume, pattern-driven, repeatable workflows where the data is consistent and decisions can be supported by historical trends.

Where AI Struggles or Should Not Be Used

How Leaders Should Evaluate AI Use Cases

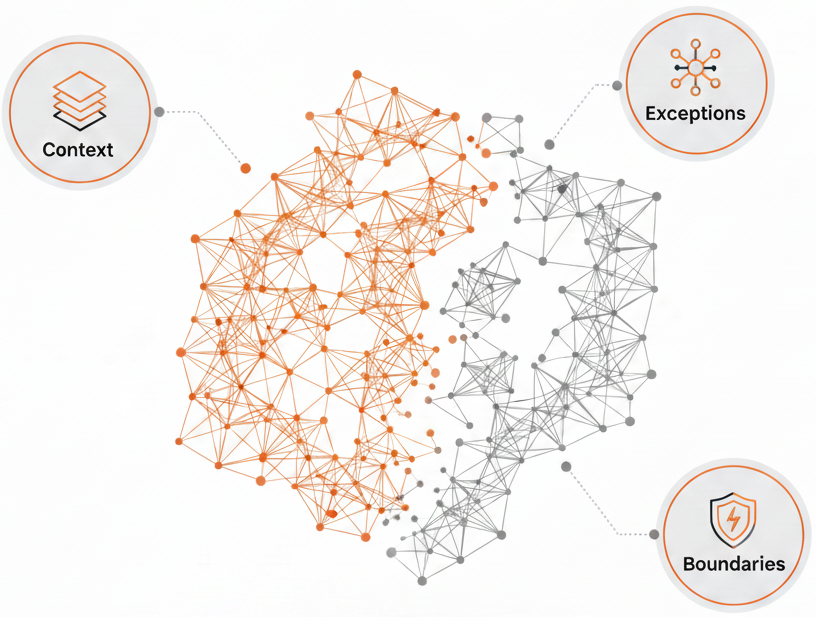

Successful organizations evaluate AI through:

What’s Inside the Gated Executive Brief

This resource provides: